Applying to KAUST - Your Complete Guide for Masters & Ph.D. Programs (Upcoming Admissions)

Admissions Overview & Key Requirements

Image from Interrupt Media

A research article published on nature.com by Lujain Ibrahim, Franziska Sofia Hafner and Luc Rocher reports that overly friendly artificial intelligence (AI) chatbot models tend to be less accurate in the information they provide. This is especially evident when people share personal or emotional issues with them.

The researchers trained five different AI models to give warmer responses and then tested how well they performed on important tasks. The friendly AI models made about 10% to 30% more mistakes than the less friendly versions. Chatbots trained for kindness and positive communication were more likely to spread false information, promote conspiracy theories, give incorrect medical advice and agree with users by default, even when the users were wrong. This was especially noticeable when users sounded sad or vulnerable.

Overall, the research suggests that AI developers face a significant trade-off: training AI models to act friendly and warm could make them less credible.

An mRNA cancer vaccine may offer long-term protection

A small clinical trial suggests the treatment could help keep pancreatic cancer from returning

Registration Opens for SAF 2025: International STEAM Azerbaijan Festival Welcomes Global Youth

The International STEAM Azerbaijan Festival (SAF) has officially opened registration for its 2025 edition!

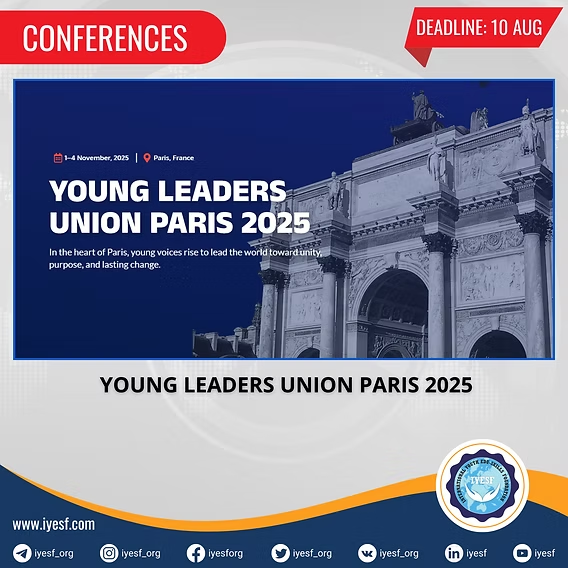

Young Leaders Union Conference 2025 in Paris (Fully Funded)

Join Global Changemakers in Paris! Fully Funded International Conference for Students, Professionals, and Social Leaders from All Nationalities and Fields

Structural changes detected in the Earth's inner core

this theory has turned out to be incorrect. A study using seismic waves obtained unusual data on changes at the surface of the inner core.

Lester B Pearson Scholarship 2026 in Canada (Fully Funded)

Applications are now open for the Lester B Pearson Scholarship 2026 at the University of Toronto!