Applying to KAUST - Your Complete Guide for Masters & Ph.D. Programs (Upcoming Admissions)

Admissions Overview & Key Requirements

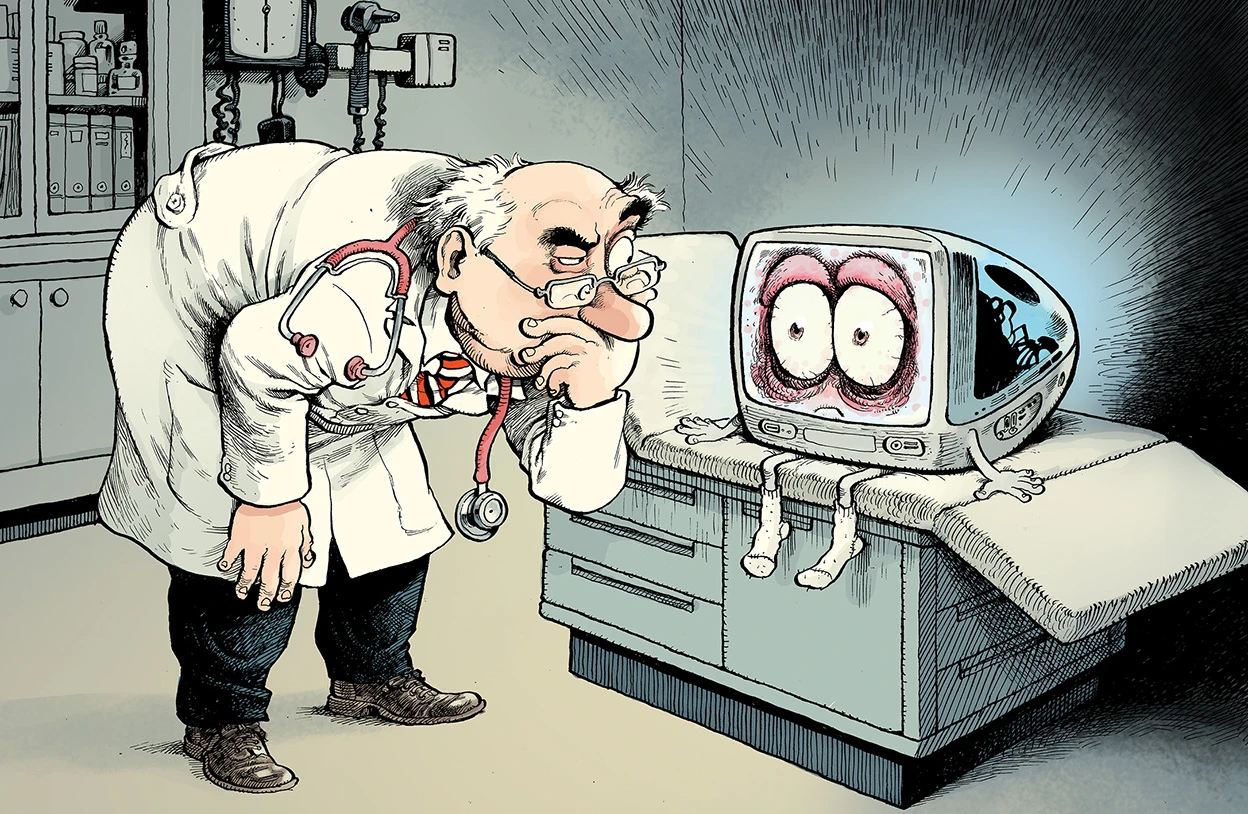

David Parkins / File: Nature

A made-up medical condition has revealed how easily artificial intelligence and even researchers can be fooled by convincing misinformation.

The fake illness, called “bixonimania,” was created in 2024 by Almira Osmanovic Thunström as part of an experiment. She published fake studies online to see if AI chatbots would treat the condition as real. They did.

Within weeks, several major AI systems were describing bixonimania as a genuine eye condition, linking it to screen use and even suggesting people seek medical advice.

The experiment worked so well that the false information didn’t just stay in AI systems. In one case, it was cited in a real academic paper, which was later retracted.

Experts say this highlights a serious issue: AI tools don’t verify facts the way a human researcher or clinician would. Instead, they generate responses by predicting likely text based on patterns in their training data. Because of that, information written in a formal, authoritative style, such as academic papers, medical reports, or clinical language, can be treated as more trustworthy than it really is. As a result, if false or misleading claims are packaged in that format, AI systems may repeat them confidently, with no built-in understanding of whether they are true.

“This is how misinformation spreads,” said Alex Ruani, warning that both AI and humans can be misled if sources aren’t carefully checked.

The fake condition may not be real, but the problem it exposed is.

