Applying to KAUST - Your Complete Guide for Masters & Ph.D. Programs (Upcoming Admissions)

Admissions Overview & Key Requirements

The Guardian reported that, in Putnam County, Florida, Interlachen Jr.-Sr. High School has used the AI platform Alongside for three years to help assess students’ mental health needs, citing budget cuts and limited counseling staff. The district is part of a growing national trend: since 2022, at least nine companies have secured funding to sell similar AI-driven monitoring tools to K-12 schools.

Alongside says it is now used in more than 200 U.S. schools and offers services beyond typical telehealth options. Its platform includes a social-emotional learning chatbot featuring “Kiwi,” a llama that engages students in conversations about life challenges and resilience-building. Company representatives say AI-generated content is monitored by clinicians and that the system expands access to mental health resources, particularly in rural communities.

Artificial intelligence has become a central component of the Trump administration’s national education agenda. However, some parents, teachers, and lawmakers are increasingly concerned about teenagers’ growing screen time. Several states have begun limiting the use of AI in telehealth settings.

Experts also warn about students forming emotional attachments to chatbots. A recent national survey found that 20% of high school students have used AI for romantic purposes or know someone who has. Federal legislation now requires AI companies to remind students that chatbots are not real people, reflecting broader concern about emotional dependency.

School counselors say student anxiety may partly explain why teens feel comfortable using these tools. Sarah Caliboso-Soto, a licensed clinical social worker and assistant director of clinical programs at the University of Southern California’s Suzanne Dworak-Peck School of Social Work, said speaking with a mental health professional can feel intimidating for adolescents. For students raised on messaging platforms and social media, AI chat interfaces may feel more familiar and less judgmental.

Linda Charmaraman, director of the Youth, Media & Wellbeing Research Lab at the Wellesley Centers for Women, noted that many young people find texting easier than making phone calls. AI tools allow students to process emotions without worrying about facial expressions or immediate judgment, and they are available without the need to schedule appointments.

Caliboso-Soto emphasized that AI can serve as a "first line of defense" in under-resourced schools by checking in with students and directing those in need to human support.

Alongside's starting price is about $10 per student annually, with volume discounts for larger districts.

Still, she cautioned against using AI as a substitute for licensed counselors. While large language models can be trained to detect textual signs of distress, they cannot interpret tone of voice, body language, or subtle behavioral cues. “You cannot replace human connection and human judgment,” she said.

Alongside representatives maintain that the platform is not intended to replace therapy but to encourage students to seek adult help. Ava Shropshire, a youth advisor for the company and a junior at the University of Washington, said the app helps normalize conversations about mental health and may serve as a stepping stone to human support.

Privacy concerns also remain. Experts warn that chatbot conversations generally do not carry the same confidentiality protections as sessions with licensed therapists. Even when overseen by clinically trained staff, questions persist about how student data is handled and whether monitoring could lead to disciplinary consequences.

Phillips, the counselor in Putnam County, stressed that human oversight is essential. She said the AI tool provides more flexibility than other monitoring systems that often direct students toward disciplinary action rather than mental health support.

As of February this school year, the system had generated 19 "serious" alerts among 393 active users, and some students triggered multiple alerts. Phillips has learned that human judgment is needed to interpret teenage humor and boundary-testing. Middle school boys, she said, sometimes type statements such as "my uncle touches me" or "my mom beats me with a pole" to see whether adults are paying attention.

When she pulls students aside, Phillips observes their body language to determine whether a comment reflects a genuine concern. If it was a joke, students often apologize. If they show no remorse, she contacts parents. Even in such cases, she believes the system offers more supportive options than other monitoring tools that might remove students from school.

Over time, she said, students have come to believe that adults are actively monitoring the system, and the number of students testing its limits has declined each year.

An mRNA cancer vaccine may offer long-term protection

A small clinical trial suggests the treatment could help keep pancreatic cancer from returning

Registration Opens for SAF 2025: International STEAM Azerbaijan Festival Welcomes Global Youth

The International STEAM Azerbaijan Festival (SAF) has officially opened registration for its 2025 edition!

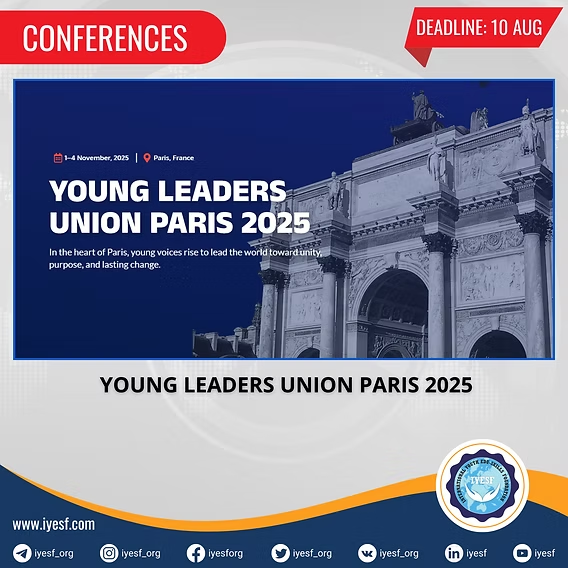

Young Leaders Union Conference 2025 in Paris (Fully Funded)

Join Global Changemakers in Paris! Fully Funded International Conference for Students, Professionals, and Social Leaders from All Nationalities and Fields

Yer yürəsinin daxili nüvəsində struktur dəyişiklikləri aşkar edilib

bu nəzəriyyənin doğru olmadığı məlum olub. Seismik dalğalar vasitəsilə aparılan tədqiqatda daxili nüvənin səthindəki dəyişikliklərə dair qeyri-adi məlumatlar əldə edilib.

Lester B Pearson Scholarship 2026 in Canada (Fully Funded)

Applications are now open for the Lester B Pearson Scholarship 2026 at the University of Toronto!